RAG Isn't Solving Your Retrieval Problem — It's Hiding It

RAG has quietly become the default architectural reflex for any AI product that needs to work with proprietary data. Someone asks "how do we get the LLM to know about our docs?" and within two sprints, there's an embedding pipeline, a vector database, and a chunking strategy under review. The problem isn't that RAG is wrong — it's that most teams implement it before they've diagnosed what's actually broken.

The distinction matters because RAG is expensive to build correctly, brittle in ways that don't surface until production, and frequently overkill for the actual problem at hand. Before you commit to the infrastructure, it's worth asking a harder question: is your problem that the LLM lacks knowledge, or that your retrieval layer isn't surfacing the right context?

Two Different Problems That Look Identical on the Surface

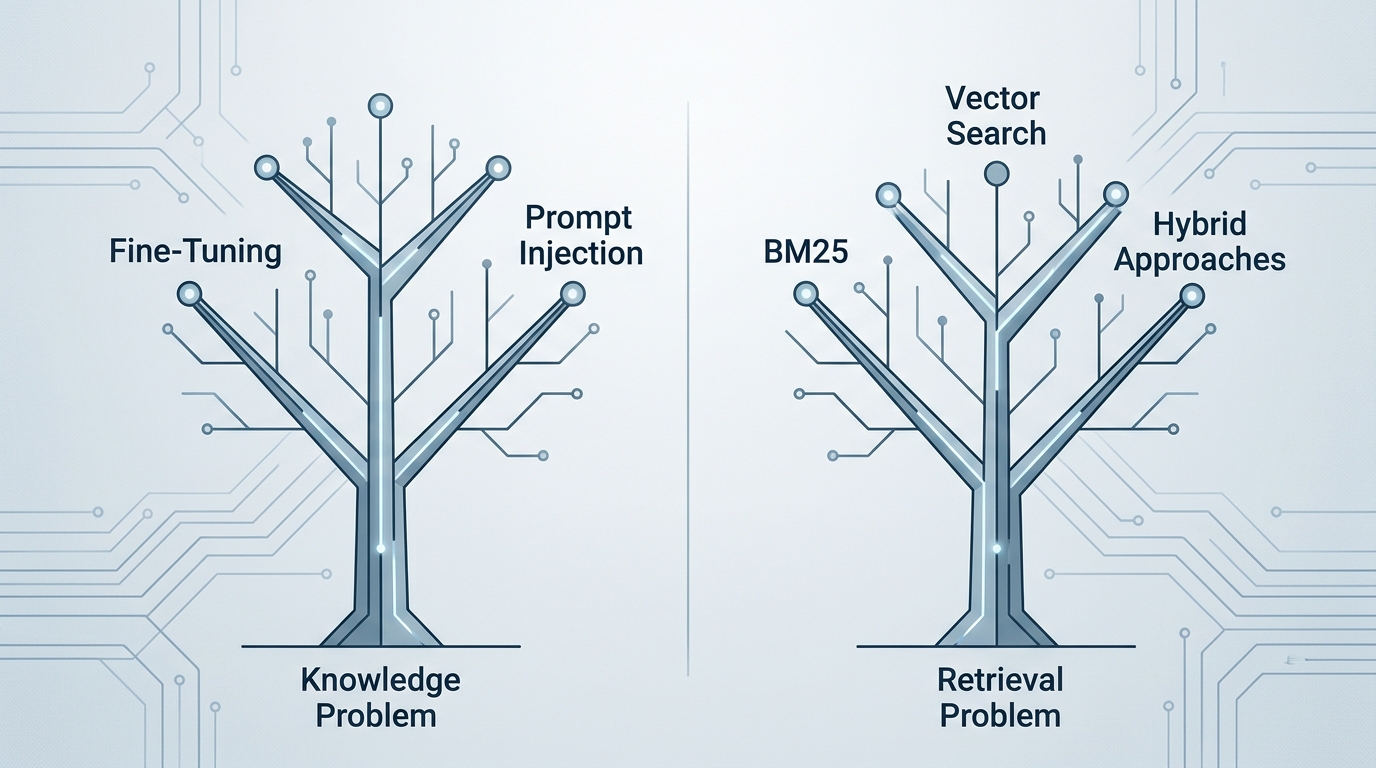

There's a conflation that happens early in most AI product conversations: "we need the LLM to know about X" gets treated as equivalent to "we need to retrieve the right context for X." These are architecturally different problems with different solutions.

The knowledge problem is real but narrow. It applies when the LLM genuinely has no exposure to your domain — your internal product nomenclature, proprietary processes, or data created after the model's training cutoff. Fine-tuning addresses this, though it's expensive and inflexible. Injecting context via prompt engineering addresses it for smaller knowledge sets.

The retrieval problem is about finding the right chunk of information at inference time. This is where RAG lives. But retrieval quality is not a uniquely AI problem — it's the same problem information retrieval systems have been solving since the 1970s. BM25, TF-IDF, faceted search, and rule-based ranking are mature, well-understood tools. The question isn't whether RAG can solve retrieval — it's whether semantic vector search is the right retrieval mechanism for your specific query patterns.

When Traditional Search Outperforms RAG

RAG's semantic search capability is genuinely useful when queries are fuzzy, conceptual, or phrased differently from how documents are written. But that's a narrower set of use cases than it seems. Here are three categories where deterministic retrieval consistently wins:

E-commerce and structured product catalogs. Product search has well-defined attributes — SKU, category, price range, availability, compatibility. A user searching for "waterproof hiking boots under $150 in size 11" is expressing a set of filters, not a semantic concept. Vector search on product embeddings will find boots that are conceptually similar to the query, which is not what the user wants. Faceted search with BM25 for keyword matching and hard filters for attributes is faster, cheaper, and more predictable. Semantic search adds value only at the edges — handling misspellings, synonym resolution, or intent disambiguation — and even then, a well-tuned synonym dictionary often handles this adequately.

Support ticket routing. Most support systems have a finite taxonomy of issue types. A ticket saying "my invoice didn't download" needs to route to billing, not to be semantically matched against a vector store of past tickets. Rule-based classifiers or even simple keyword matching with a small intent classification model handle this reliably. RAG introduces latency and cost with no meaningful accuracy improvement when the label space is well-defined.

Internal knowledge bases with stable schemas. If your company's HR policies, engineering runbooks, or compliance docs have consistent structure and change on a quarterly cycle, a well-indexed document store with BM25 search and metadata filtering will outperform a RAG pipeline on precision. The reason: RAG's chunking strategy almost always loses structural context. A policy document with numbered sections gets split mid-paragraph, and the embedding of "Section 4.2 — Reimbursement Limits" loses the hierarchical context that makes it answerable. A traditional search system that preserves document structure and returns full sections is more reliable here.

When RAG earns its complexity

- Queries are conceptual or fuzzy ("what's our approach to incident response?")

- Document corpus is large, unstructured, and changes frequently

- Users phrase questions differently than documents are written

- Cross-document synthesis is required to answer a question

When traditional search wins

- Queries map to structured attributes or filters

- Document schema is stable and well-organized

- Routing or classification into a known taxonomy

- Latency and cost constraints are tight

The Hidden Costs That Don't Show Up in the Pitch

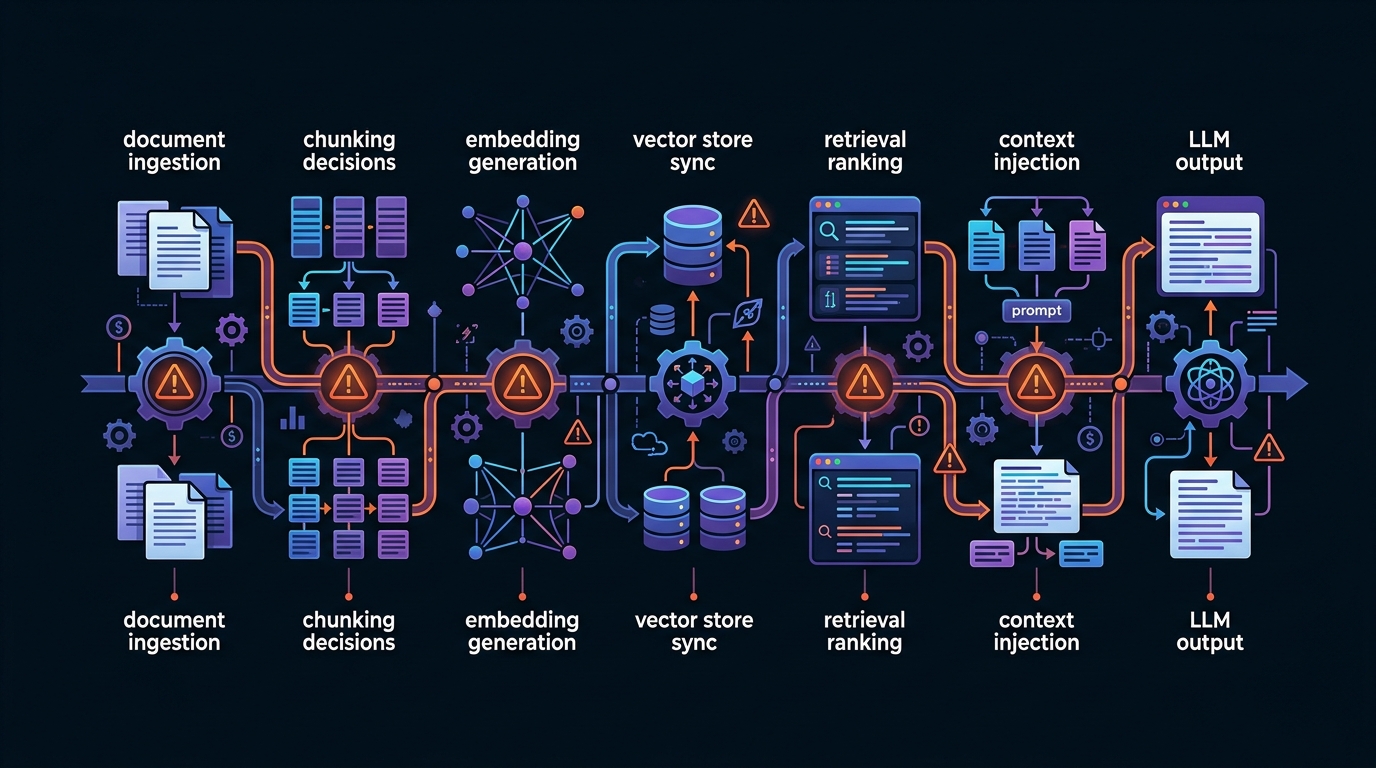

RAG implementations are almost universally scoped at 2–3x their actual cost. The embedding pipeline and vector database are the visible work. Here's what gets underestimated:

Embedding staleness. Every time a source document changes, the embeddings need to be regenerated. This sounds trivial until you have 50,000 documents with a partial update cycle — some docs updated daily, others yearly. Without a robust change-detection and re-embedding pipeline, your vector store drifts out of sync with your source of truth. Teams routinely discover this six months post-launch when users start getting answers based on outdated policy text.

Chunking brittleness. There is no universally correct chunking strategy. Fixed-size chunks lose semantic boundaries. Sentence-level chunks lose context. Paragraph-level chunks vary wildly in information density. The "right" chunk size depends on your document type, query patterns, and embedding model — and it requires empirical testing to tune. A poorly chunked corpus will consistently retrieve adjacent-but-wrong content, which the LLM will then confidently synthesize into a plausible-sounding wrong answer.

Hallucinated citations. RAG reduces hallucination rates, but it doesn't eliminate them. Research on legal AI applications has found hallucination rates of 17–33% even in RAG-augmented systems — the LLM retrieves a real document and then paraphrases it inaccurately, or worse, cites a document that doesn't support the claim it's making. Users trust RAG outputs more because they see source citations, which makes these failures harder to catch and more damaging when they surface.

Eval burden. Validating retrieval quality requires a labeled evaluation set — query/expected-document pairs — that most teams don't have and have to build from scratch. Without this, you're flying blind on whether your retrieval layer is actually working. Building a meaningful eval set for a mid-sized knowledge base typically takes 2–4 weeks of domain expert time. This almost never appears in initial project scopes.

A Decision Framework for When to Actually Use RAG

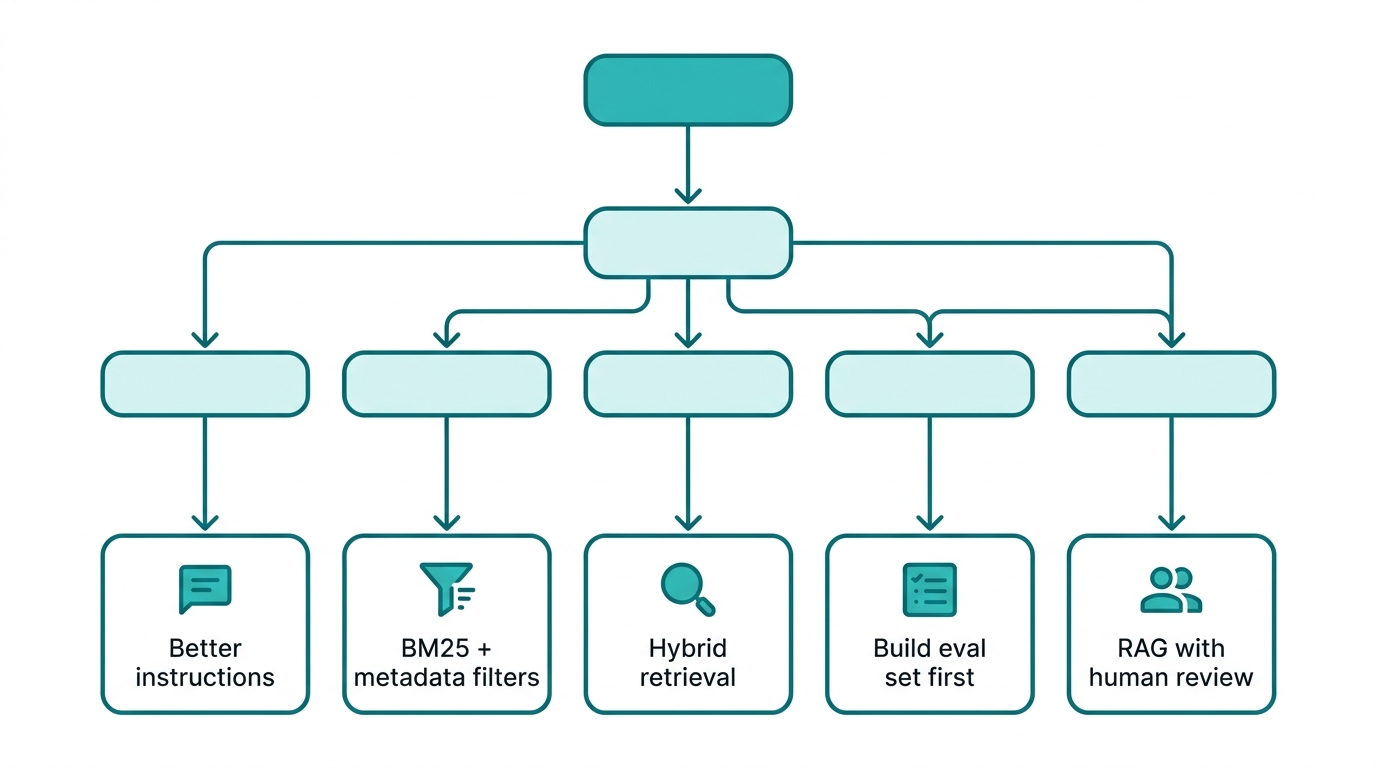

Before committing to RAG infrastructure, run through this sequence:

Step 1: Can you solve this with better instructions? Take your worst-performing queries. Write the ideal answer. Now write a system prompt that, combined with the relevant document pasted directly into context, produces that answer. If this works, your problem is instruction quality, not retrieval. Fix the prompt first.

Step 2: Does your query pattern require semantic matching? Log 50–100 real user queries. Categorize them: structured/filterable, keyword-matchable, or genuinely semantic/conceptual. If fewer than 30% require semantic understanding, BM25 with good metadata filtering will handle the majority of your traffic better than a vector search pipeline.

Step 3: What's your document change frequency? If documents update daily or in real-time, you need a re-embedding pipeline robust enough to keep pace. Price this out before building. If documents are largely static with quarterly updates, the staleness problem is manageable. If they're somewhere in between, hybrid approaches — keeping a traditional search index as the primary retrieval layer with semantic re-ranking as a second pass — often work better than pure RAG.

Step 4: Do you have an eval set? If you can't measure retrieval quality, you can't improve it. Before building the retrieval system, commit to building the evaluation set. If domain experts aren't available to label query/document pairs, that's a signal the project isn't ready for RAG yet.

Step 5: What's the cost of a wrong answer? In low-stakes contexts (content recommendations, internal tooling), RAG's failure modes are acceptable. In high-stakes contexts (compliance, legal, medical, financial), the hallucinated-citation failure mode is dangerous enough to warrant either deterministic retrieval with exact-match sourcing, or a human review step that changes the economics of the entire system.

What does a hybrid retrieval approach actually look like?

A hybrid retrieval system uses a fast, deterministic first-pass retrieval (BM25 or metadata filtering) to narrow the candidate set, then applies a semantic re-ranking model as a second pass to reorder results by conceptual relevance before injecting them into the LLM context.

This approach has a few practical advantages:

- The first-pass index is cheap to update incrementally — no re-embedding required when a document changes, just re-indexing

- The semantic re-ranker operates on a small candidate set (top 20–50 results), so latency is manageable

- You can tune each layer independently — improving keyword recall without touching the semantic layer, or upgrading the re-ranker without rebuilding the index

Tools like Cohere Rerank and Jina Reranker make the re-ranking layer relatively easy to add to an existing search stack. This is often the right architecture for teams with existing Elasticsearch or OpenSearch infrastructure who want to add semantic capability without a full RAG rebuild.

How to Test the Hypothesis Before Building

The fastest way to validate whether your problem is retrieval quality or something else is a manual simulation test — sometimes called a "retrieval oracle" test.

Take 20 representative queries from your target use case. Manually find the ideal source document or passage for each one. Paste that ideal context directly into an LLM prompt and ask the query. If response quality is good, your retrieval layer is the bottleneck — and now you have a quality ceiling to optimize toward. If response quality is still poor with perfect context, you have a reasoning or instruction problem that RAG will not fix.

This test takes a few hours and can save months of engineering work. The number of teams that skip it and go straight to building a vector pipeline is, based on patterns in the field, most of them.

The Actual Question RAG Answers

RAG is a good answer to a specific question: "How do I give an LLM access to a large, unstructured, frequently-queried corpus where queries are semantically varied and can't be anticipated in advance?" That's a real problem. It's also a narrower problem than "we need the LLM to know things."

The teams that get the most out of RAG are the ones who exhausted simpler options first — who tried prompt engineering, tried BM25, tried metadata filtering — and found those approaches genuinely insufficient for their query patterns. They built RAG with a clear eval set, a chunking strategy validated against real queries, and a re-embedding pipeline scoped into the initial build.

The teams that struggle with RAG are the ones who reached for it as a default, discovered the hidden costs six months in, and are now maintaining a complex system that a well-tuned Elasticsearch index would have handled adequately.

The reflex to "just use RAG" is understandable — the demos are impressive, the tooling has matured, and it feels like the modern answer. But modern doesn't mean appropriate. Diagnose the problem before prescribing the architecture.